Generative AI took the consumer landscape by storm in 2023, reaching over a billion dollars of consumer spend¹ in record time. In 2024, we believe the revenue opportunity will be several times larger in the enterprise.

Last year, while consumers spent hours chatting with new AI companions or making images and videos with diffusion models, most enterprise engagement with genAI seemed limited to a handful of obvious use cases and shipping “GPT-wrapper” products as new SKUs. Some naysayers doubted that genAI could scale into the enterprise at all. Aren’t we stuck with the same 3 use cases? Can these startups actually make any money? Isn’t this all hype?

Over the past couple of months, we’ve spoken with dozens of Fortune 500 and top enterprise leaders² and surveyed 70 more to understand how they’re using, buying, and budgeting for generative AI. We were shocked by how much the resourcing and attitudes toward genAI had changed over the last 6 months. Though these leaders still have some reservations about deploying generative AI, they’re also nearly tripling their budgets, expanding the number of use cases deployed on smaller open-source models, and moving more workloads from early experimentation into production.

This is a massive opportunity for founders. We believe that AI startups who 1) build for enterprises’ AI-centric strategic initiatives while anticipating their pain points, and 2) move from a services-heavy approach to building scalable products will capture this new wave of investment and carve out significant market share.

As always, building and selling any product for the enterprise requires a deep understanding of customers’ budgets, concerns, and roadmaps. To clue founders into how enterprise leaders are making decisions about deploying generative AI, and to give AI executives a handle on how other leaders in the space are approaching the same problems, we’ve outlined below 16 top-of-mind considerations about resourcing, models, and use cases, drawn from our recent conversations with those leaders.

Resourcing: budgets are growing dramatically and here to stay

1. Budgets for generative AI are skyrocketing.

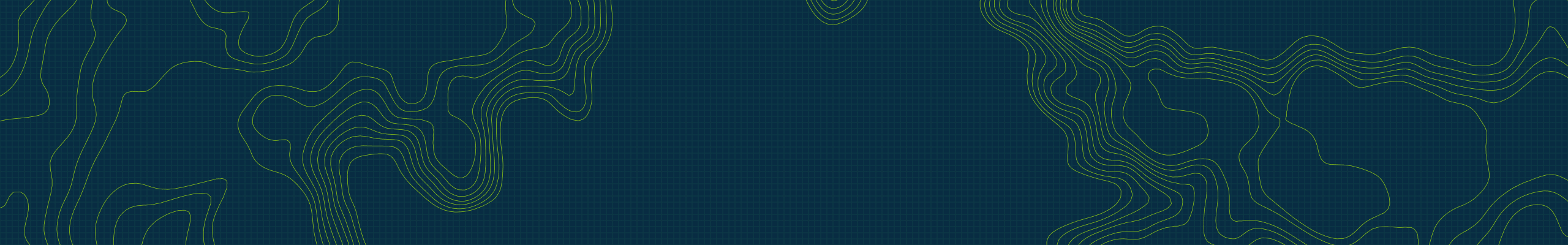

Among the dozens of companies we spoke to, average 2023 spend on foundation model APIs, self-hosting, and fine-tuning was $7M. Moreover, nearly every enterprise we spoke with saw promising early results from genAI experiments and planned to increase its spend anywhere from 2x to 5x in 2024 to support deploying more workloads to production.

2. Leaders are starting to reallocate AI investments to recurring software budget lines.

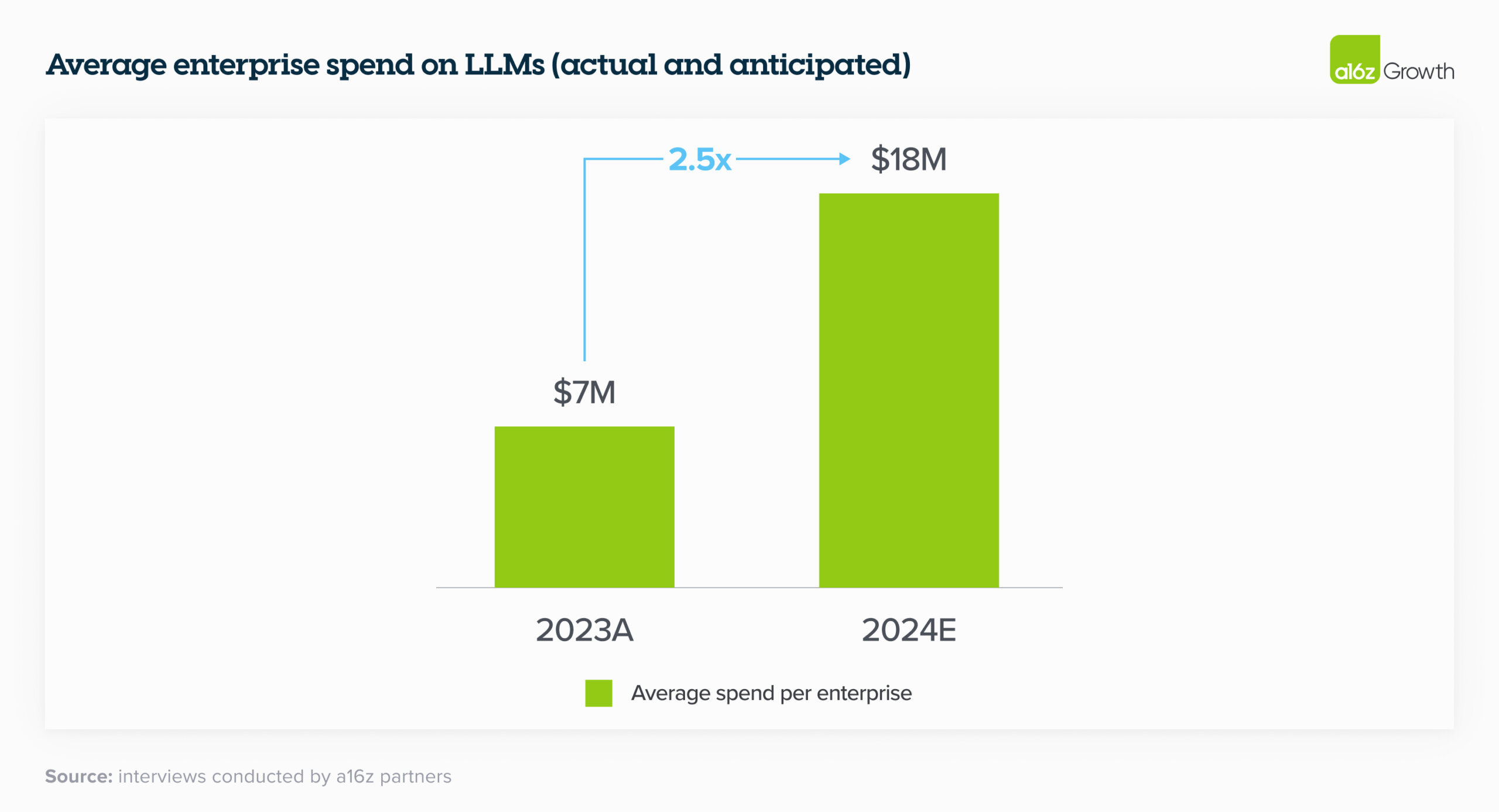

Last year, much of enterprise genAI spend unsurprisingly came from “innovation” budgets and other typically one-time pools of funding. In 2024, however, many leaders are reallocating that spend to more permanent software line items; fewer than a quarter reported that genAI spend will come from innovation budgets this year. On a much smaller scale, we’ve also started to see some leaders deploying their genAI budget against headcount savings, particularly in customer service. We see this as a harbinger of significantly higher future spend on genAI if the trend continues. One company cited saving ~$6 for each call served by their LLM-powered customer service (a total of ~90% cost savings) as a reason to increase their investment in genAI eightfold.
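Those two figures also pin down the implied cost per call. A quick back-of-the-envelope check, assuming the ~$6 saving represents 90% of the original per-call cost (both numbers are rounded in the source, so the results are approximate):

```python
def implied_per_call_costs(savings_per_call: float, savings_share: float) -> tuple[float, float]:
    """Back out the pre-LLM and post-LLM cost per call from an absolute saving
    and the share of the original cost that the saving represents."""
    baseline_cost = savings_per_call / savings_share  # cost per call before the LLM
    llm_cost = baseline_cost - savings_per_call  # what each LLM-served call still costs
    return baseline_cost, llm_cost


# Both inputs are the approximate figures quoted above (~$6 saved, ~90% savings).
baseline, per_call = implied_per_call_costs(6.0, 0.90)
print(f"baseline ≈ ${baseline:.2f}, LLM-served ≈ ${per_call:.2f}")
# prints: baseline ≈ $6.67, LLM-served ≈ $0.67
```

Under that assumption, each LLM-served call still costs well under a dollar.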

Here’s the overall breakdown of how orgs are allocating their LLM spend:

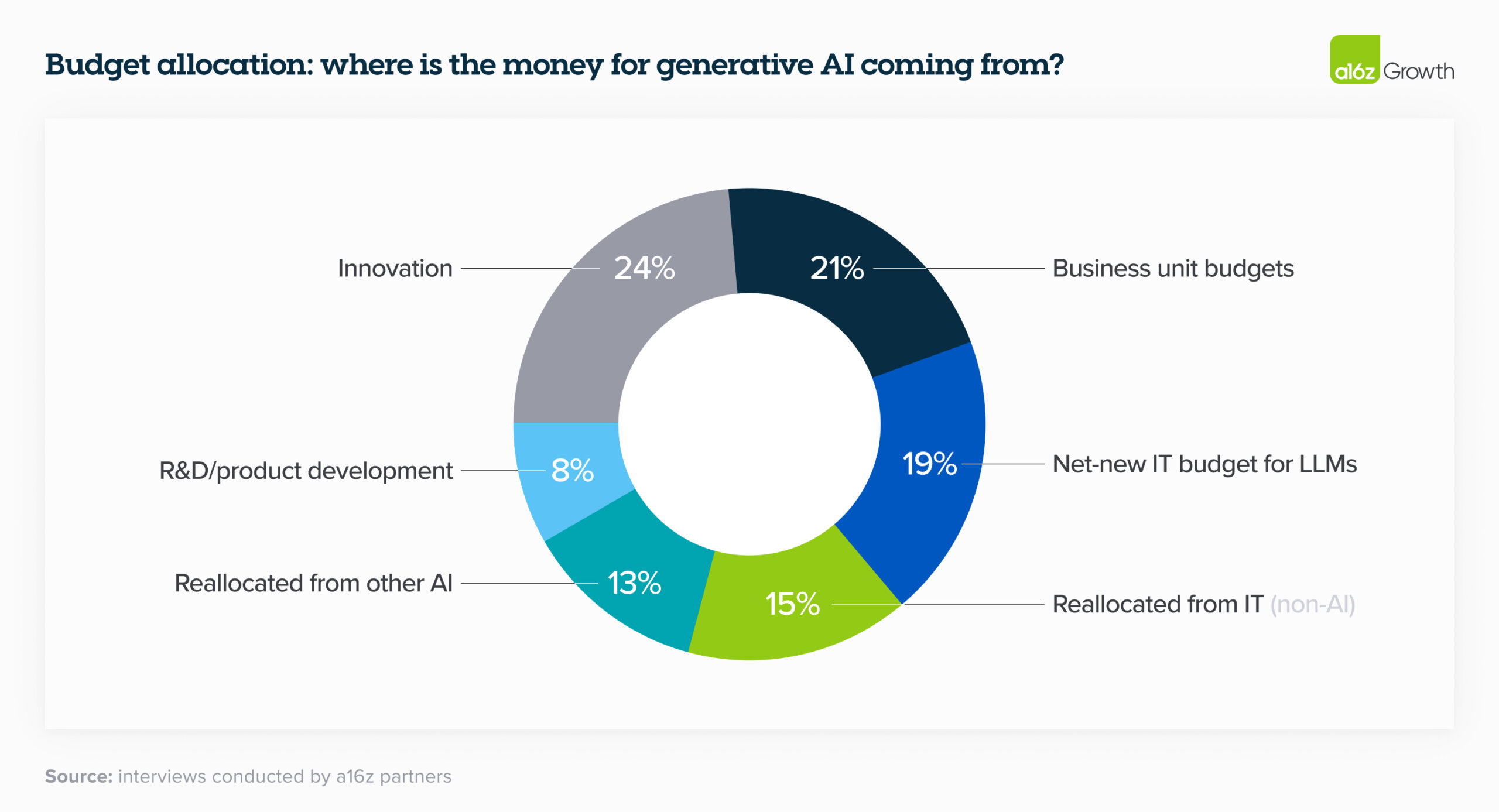

3. Measuring ROI is still an art and a science.

Enterprise leaders currently measure ROI mostly by the productivity gains AI generates. While they rely on NPS and customer satisfaction as good proxy metrics, they’re also looking for more tangible ways to measure returns, such as revenue generation, savings, efficiency, and accuracy gains, depending on their use case. In the near term, leaders are still rolling out this tech and figuring out the best metrics to quantify returns, but over the next 2 to 3 years ROI will become increasingly important. In the meantime, many are taking it on faith when their employees say they’re making better use of their time.

4. Implementing and scaling generative AI requires the right technical talent, which currently isn’t in-house for many enterprises.

Simply having an API to a model provider isn’t enough to build and deploy generative AI solutions at scale. It takes highly specialized talent to implement, maintain, and scale the requisite computing infrastructure. Implementation alone accounted for one of the biggest areas of AI spend in 2023 and was, in some cases, the largest. One executive mentioned that “LLMs are probably a quarter of the cost of building use cases,” with development costs accounting for the majority of the budget.

To help enterprises get up and running on their models, foundation model providers have offered, and still provide, professional services, typically related to custom model development. We estimate that this made up a sizable portion of revenue for these companies in 2023 and, in addition to performance, was one of the key reasons enterprises selected certain model providers. Because it’s so difficult to get the right genAI talent in the enterprise, startups that offer tooling to make it easier to bring genAI development in house will likely see faster adoption.

Models: enterprises are trending toward a multi-model, open source world

5. A multi-model future.

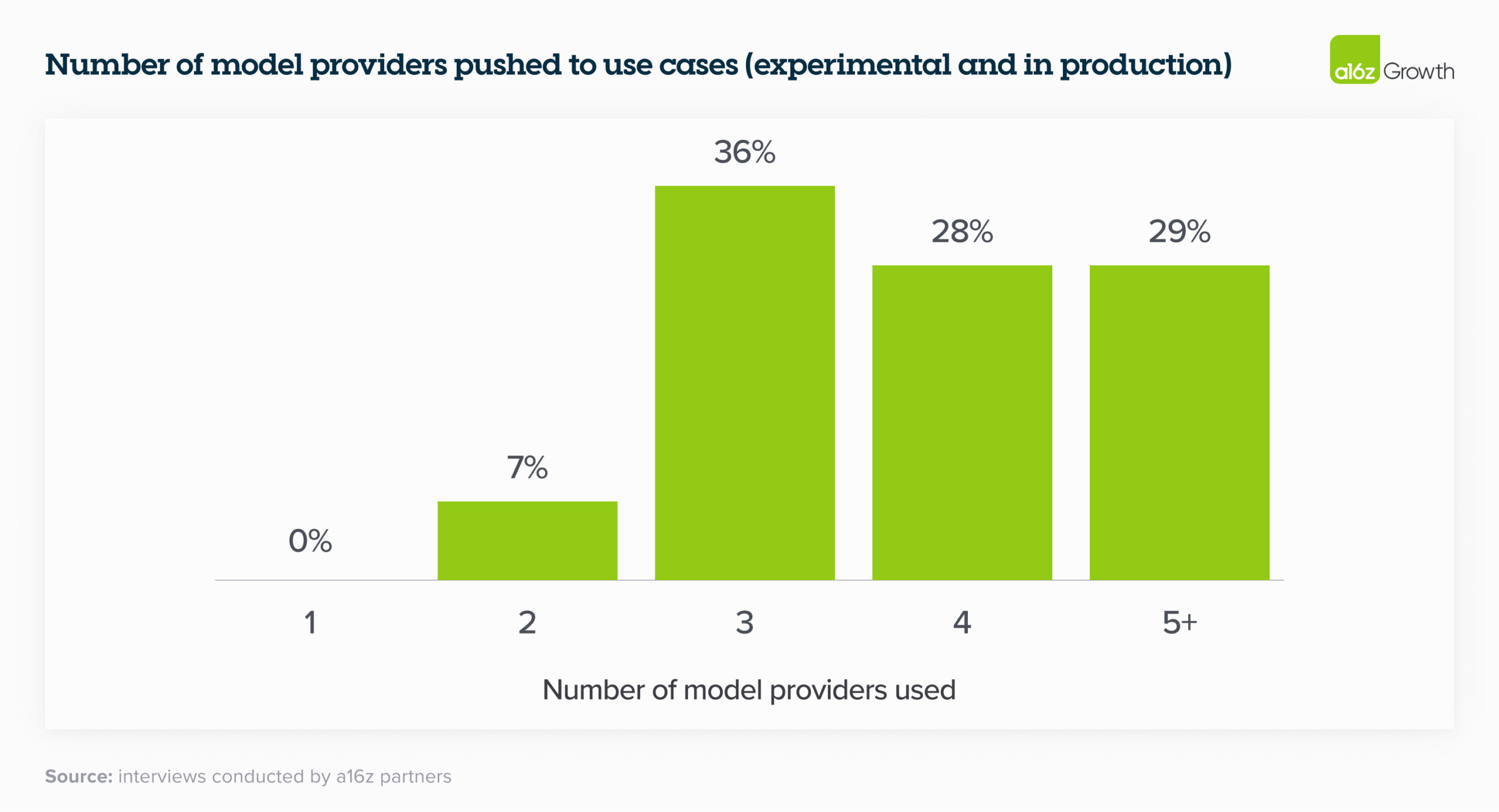

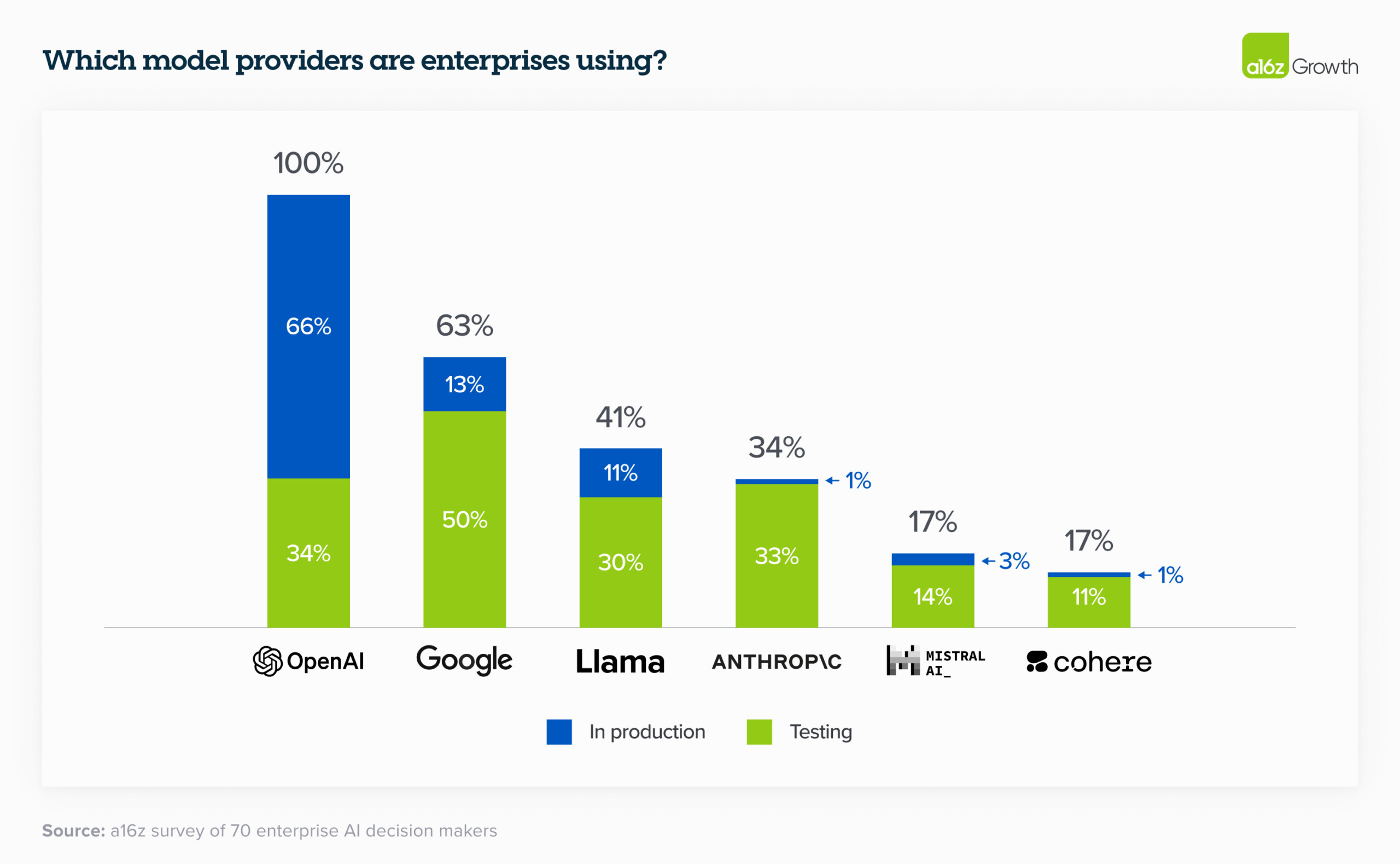

Just over 6 months ago, the vast majority of enterprises were experimenting with 1 model (usually OpenAI’s) or 2 at most. Today, the enterprise leaders we talk to are all testing (and in some cases even using in production) multiple models, which allows them to 1) tailor to use cases based on performance, size, and cost, 2) avoid lock-in, and 3) quickly tap into advancements in a rapidly moving field. This third point was especially important to leaders, since the model leaderboard is dynamic and companies are excited to incorporate both current state-of-the-art models and open-source models to get the best results.

We’ll likely see even more models proliferate. In the table below, drawn from survey data, enterprise leaders reported a number of models in testing, which is a leading indicator of the models that will be used to push workloads to production. For production use cases, OpenAI still has dominant market share, as expected.

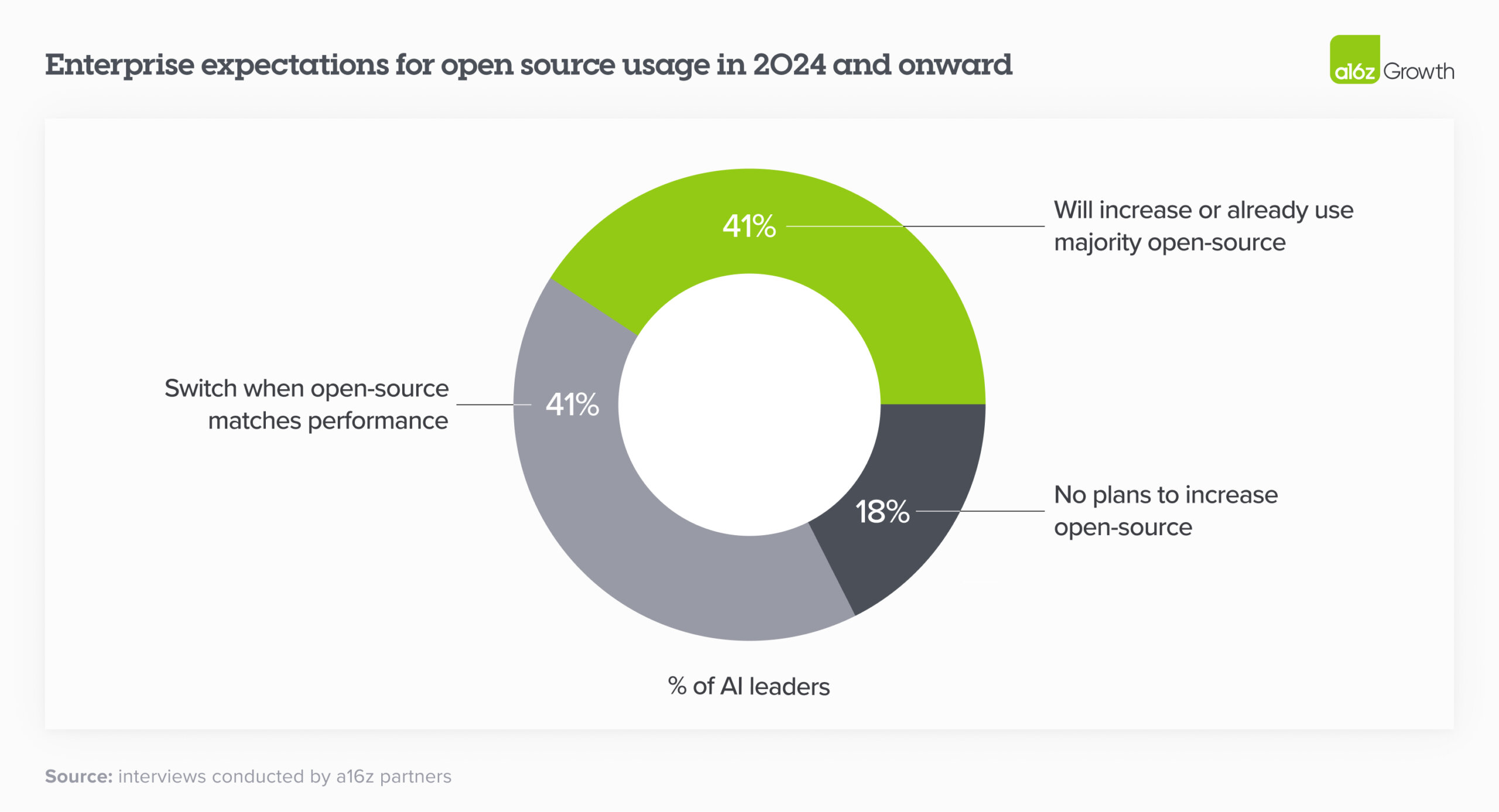

6. Open source is booming.

This is one of the most surprising changes in the landscape over the past 6 months. We estimate the market share in 2023 was 80%–90% closed source, with the majority of share going to OpenAI. However, 46% of survey respondents mentioned that they prefer or strongly prefer open source models going into 2024. In interviews, nearly 60% of AI leaders noted that they were interested in increasing open source usage or switching when fine-tuned open source models roughly matched performance of closed-source models. In 2024 and onwards, then, enterprises expect a significant shift of usage towards open source, with some expressly targeting a 50/50 split—up from the 80% closed/20% open split in 2023.

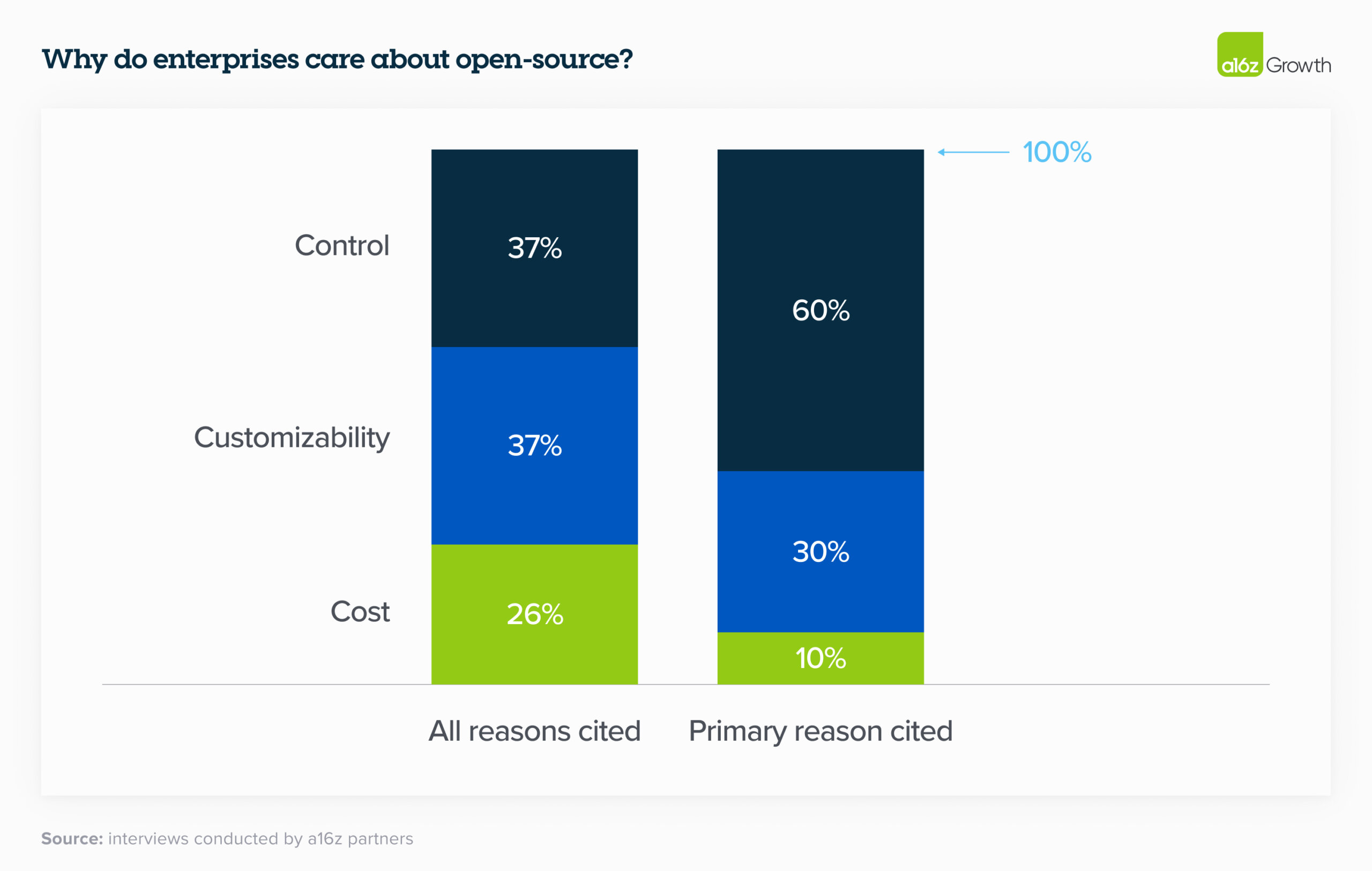

7. While cost factored into open source appeal, it ranked below control and customization as key selection criteria.

Control (security of proprietary data and understanding why models produce certain outputs) and customization (ability to effectively fine-tune for a given use case) far outweighed cost as the primary reasons to adopt open source. We were surprised that cost wasn’t top of mind, but it reflects leaders’ current conviction that the excess value created by generative AI will likely far outweigh its price. As one executive explained: “getting an accurate answer is worth the money.”

8. Desire for control stems from sensitive use cases and enterprise data security concerns.

Enterprises still aren’t comfortable sharing their proprietary data with closed-source model providers out of regulatory or data security concerns—and unsurprisingly, companies whose IP is central to their business model are especially conservative. While some leaders addressed this concern by hosting open source models themselves, others noted that they were prioritizing models with virtual private cloud (VPC) integrations.

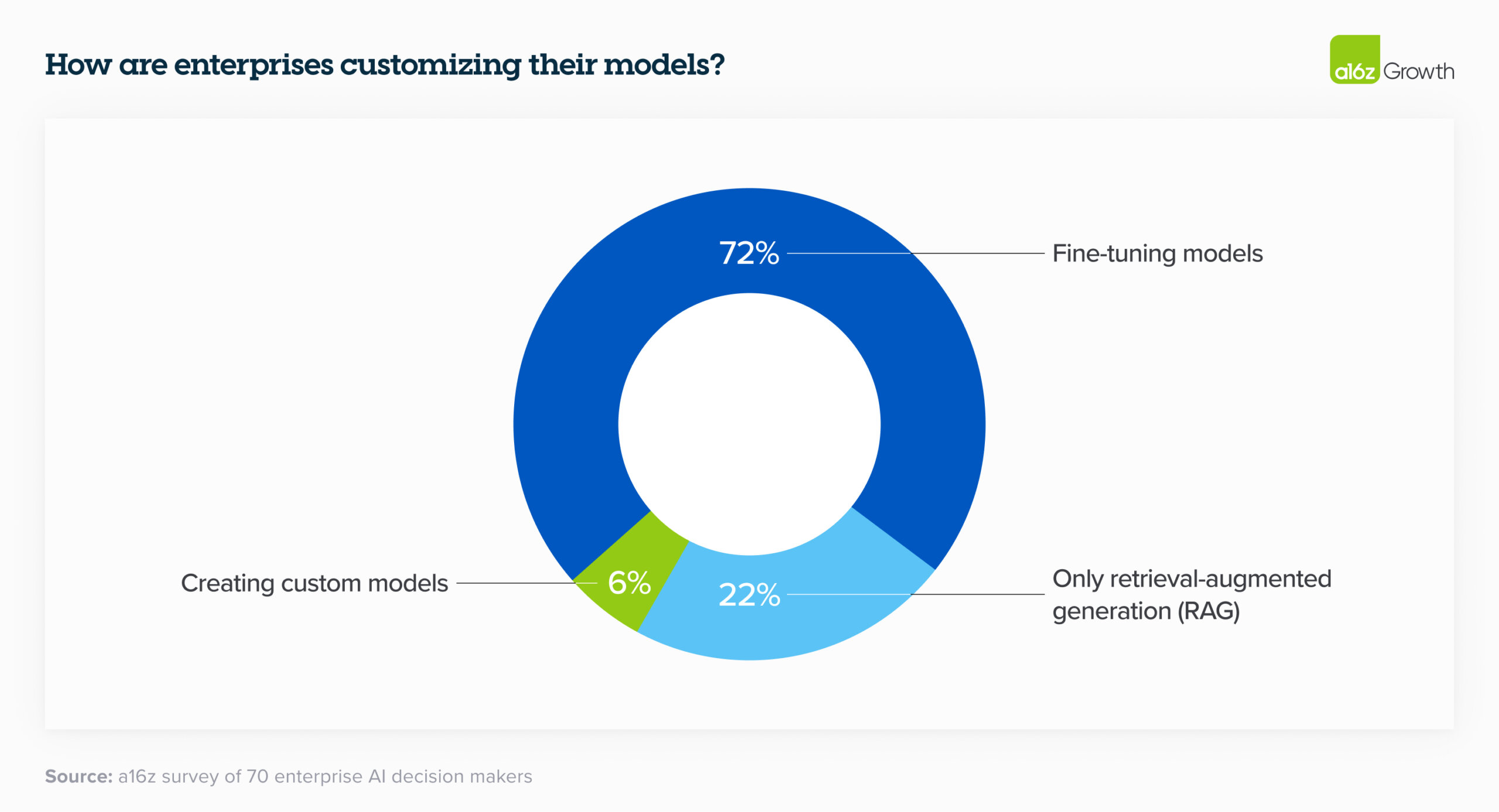

9. Leaders generally customize models through fine-tuning instead of building models from scratch.

In 2023, there was a lot of discussion around building custom models like BloombergGPT. In 2024, enterprises are still interested in customizing models, but with the rise of high-quality open source models, most are opting not to train their own LLM from scratch and instead use retrieval-augmented generation (RAG) or fine-tune an open source model for their specific needs.
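For readers less familiar with RAG, the core idea is to retrieve relevant passages from the enterprise’s own documents at query time and place them in the prompt, rather than changing the model’s weights as fine-tuning does. The sketch below is a toy under stated assumptions: it substitutes bag-of-words cosine similarity for the learned embedding model a production system would typically use, and it stops at building the prompt rather than calling any real model API. The function names and sample documents are hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> Counter:
    # Lowercased word counts: a crude stand-in for a learned embedding model.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query."""
    q = tokenize(query)
    return sorted(docs, key=lambda d: cosine(q, tokenize(d)), reverse=True)[:k]


def build_prompt(query: str, docs: list[str], k: int = 2) -> str:
    """Augment the user's question with retrieved context before it goes to a model."""
    context = "\n".join(f"- {d}" for d in retrieve(query, docs, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


# Hypothetical proprietary knowledge base.
docs = [
    "Refunds are issued within 14 days of a returned item being received.",
    "Our headquarters are located in Toronto.",
    "Premium support is available 24/7 for enterprise customers.",
]
print(build_prompt("How long do refunds take?", docs, k=1))
```

Because the proprietary documents stay in a store the enterprise controls and are only injected per request, RAG fits the control concerns described earlier; fine-tuning, by contrast, builds task-specific behavior into the model itself.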

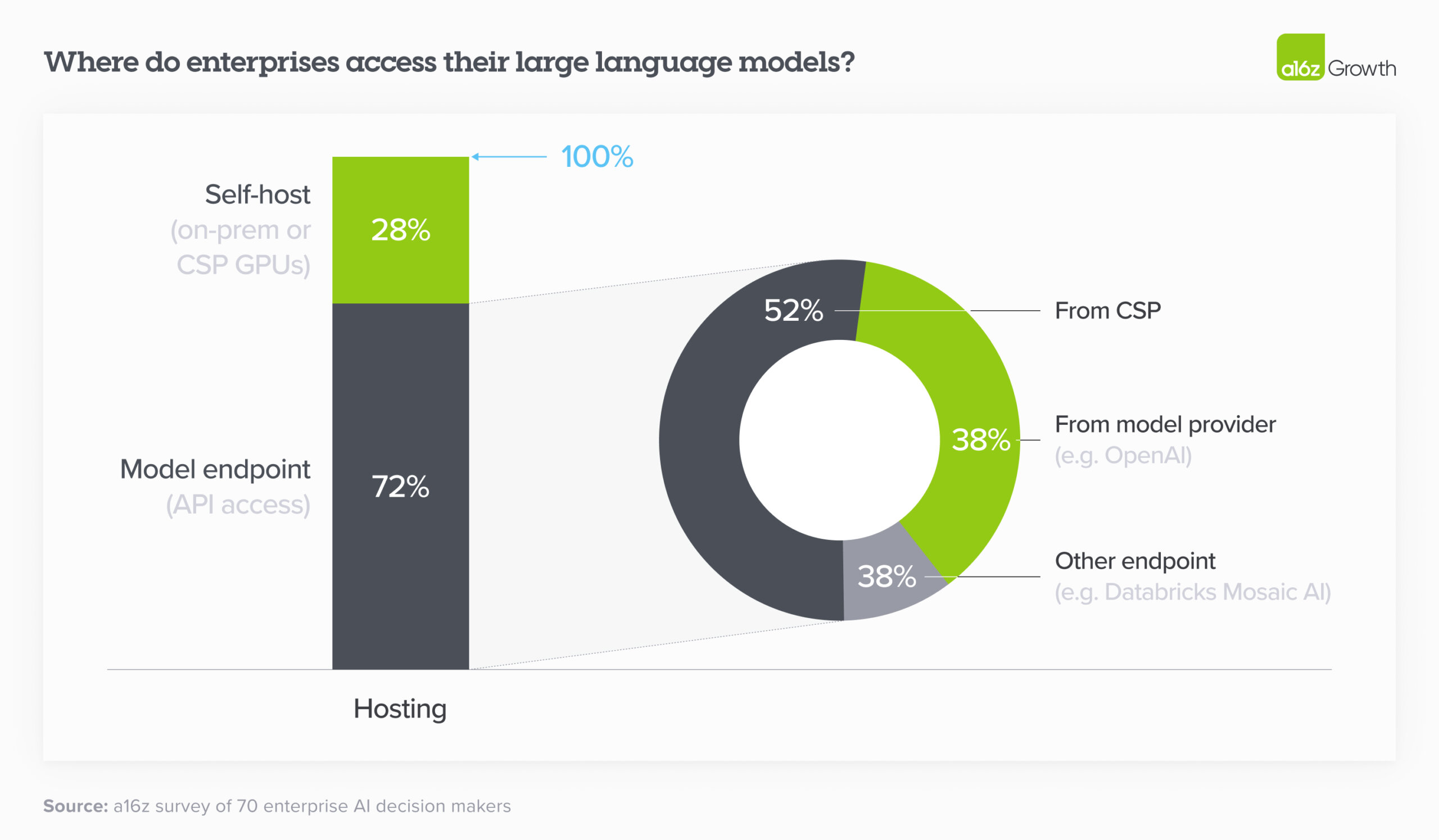

10. Cloud is still highly influential in model purchasing decisions.

In 2023, many enterprises bought models through their existing cloud service provider (CSP) for two reasons: security (leaders were more concerned about closed-source model providers mishandling their data than about their CSPs doing so) and avoiding lengthy procurement processes. This is still the case in 2024, which means that the correlation between CSP and preferred model is fairly high: Azure users generally preferred OpenAI, while Amazon users preferred Anthropic or Cohere. As we can see in the chart below, of the 72% of enterprises who use an API to access their model, over half used the model hosted by their CSP. (Note that over a quarter of respondents did self-host, likely in order to run open source models.)

11. Customers still care about early-to-market features.

While leaders cited reasoning capability, reliability, and ease of access (e.g., on their CSP) as the top reasons for adopting a given model, they also gravitated toward models with other differentiated features. Multiple leaders, for instance, cited the 200K context window Anthropic offered early on as a key reason for adopting its models, while others adopted Cohere because of its early-to-market, easy-to-use fine-tuning offering.

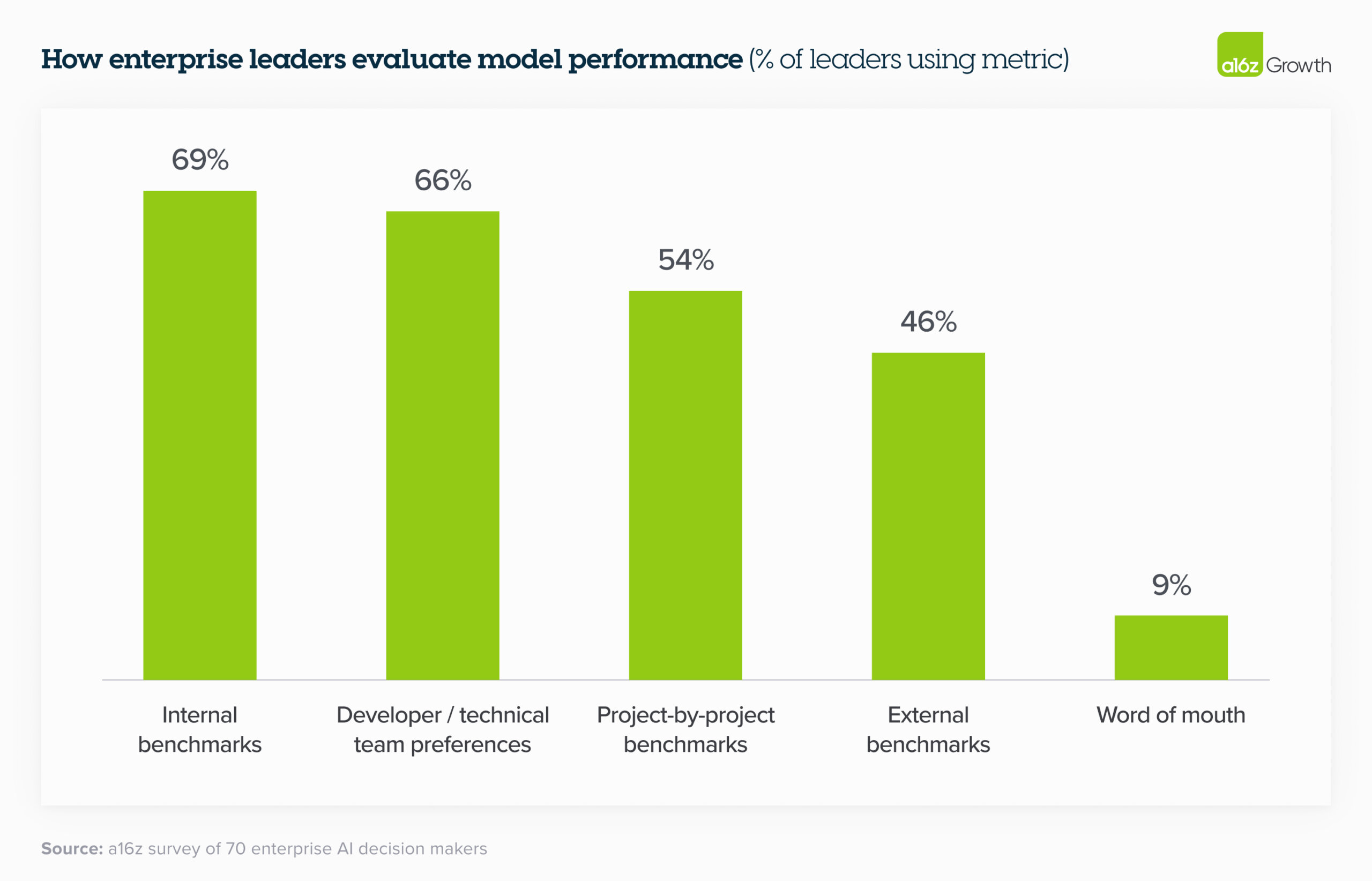

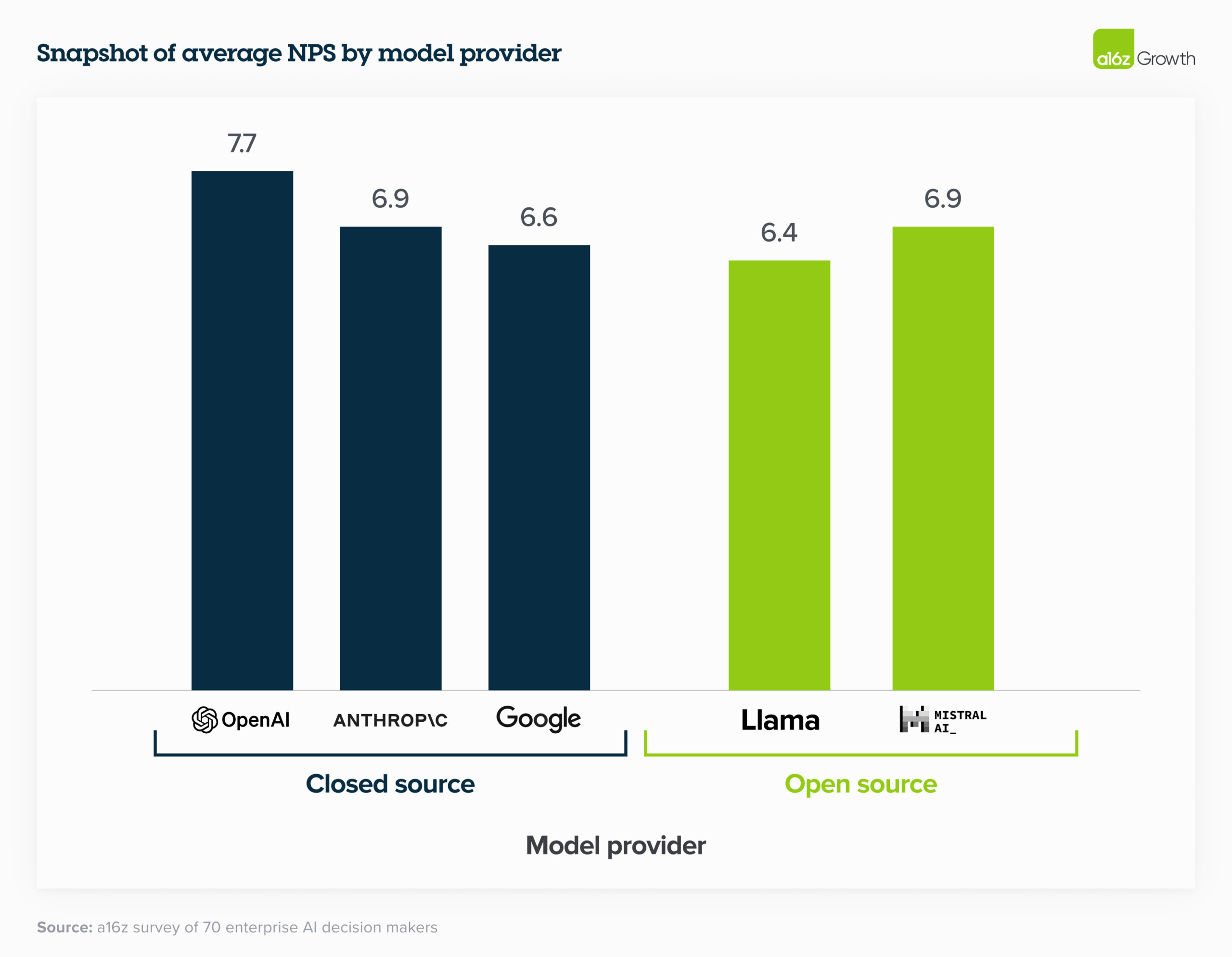

12. That said, most enterprises think model performance is converging.

While large swathes of the tech community focus on comparing model performance to public benchmarks, enterprise leaders are more focused on comparing the performance of fine-tuned open-source and fine-tuned closed-source models against their own internal sets of benchmarks. Interestingly, although closed-source models typically perform better on external benchmarks, enterprise leaders still gave open-source models relatively high NPS (in some cases higher than closed-source models) because they’re easier to fine-tune to specific use cases. One company found that “after fine-tuning, Mistral and Llama perform almost as well as OpenAI but at much lower cost.” By these standards, model performance is converging even more quickly than we anticipated, which gives leaders a broader range of very capable models to choose from.
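Comparing fine-tuned models against an internal benchmark can be as simple as scoring every candidate on the same set of prompt/answer pairs drawn from the use case. A minimal sketch, assuming exact-match accuracy as the metric (real evaluations often use graded rubrics or human review instead) and using hypothetical stub lookups in place of actual model endpoints:

```python
from typing import Callable

Benchmark = list[tuple[str, str]]  # (prompt, expected answer) pairs from an internal use case
Model = Callable[[str], str]  # any function that maps a prompt to a completion


def accuracy(model: Model, benchmark: Benchmark) -> float:
    """Share of prompts whose normalized output exactly matches the expected answer."""
    hits = sum(
        model(prompt).strip().lower() == expected.strip().lower()
        for prompt, expected in benchmark
    )
    return hits / len(benchmark)


def compare(models: dict[str, Model], benchmark: Benchmark) -> dict[str, float]:
    """Score every candidate model on the same internal benchmark."""
    return {name: accuracy(model, benchmark) for name, model in models.items()}


# Hypothetical ticket-routing benchmark and stubbed model outputs.
bench = [
    ("Route: 'my invoice is overdue'", "billing"),
    ("Route: 'I cannot reset my password'", "account"),
    ("Route: 'my package never arrived'", "shipping"),
]
stub_a = {prompt: answer for prompt, answer in bench}  # answers every item correctly
stub_b = {bench[0][0]: "Billing", bench[1][0]: "account", bench[2][0]: "returns"}

scores = compare({"model_a": stub_a.__getitem__, "model_b": stub_b.__getitem__}, bench)
print(scores)
```

The same harness could also record cost per call for each candidate, which is how a team would check a claim like “almost as well as OpenAI but at much lower cost” on its own data.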

13. Optimizing for optionality.

Most enterprises are designing their applications so that switching between models requires little more than an API change. Some companies are even pre-testing prompts so the change happens literally at the flick of a switch, while others have built “model gardens” from which they can deploy models to different apps as needed. Companies are taking this approach in part because they’ve learned some hard lessons from the cloud era about the need to reduce dependency on providers, and in part because the market is evolving at such a fast clip that it feels unwise to commit to a single vendor.
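One way to keep a model switch down to a configuration change is to have applications call a thin, provider-agnostic interface, with a registry (a “model garden”) deciding which model backs each app and which pre-tested prompt template goes with it. The sketch below is a hypothetical design, not any vendor’s API: `ChatModel`, `ModelGarden`, and `EchoModel` are illustrative names, and the stub model stands in for real provider clients.

```python
from dataclasses import dataclass, field
from typing import Protocol


class ChatModel(Protocol):
    """Anything with a complete() method: a hosted API client, a self-hosted model, etc."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class ModelGarden:
    models: dict[str, ChatModel] = field(default_factory=dict)
    templates: dict[str, str] = field(default_factory=dict)  # model name -> pre-tested prompt template
    routes: dict[str, str] = field(default_factory=dict)  # app name -> model name

    def register(self, name: str, model: ChatModel, template: str = "{prompt}") -> None:
        self.models[name] = model
        self.templates[name] = template

    def route(self, app: str, model_name: str) -> None:
        # Switching an app's model is a single routing change; app code is untouched.
        if model_name not in self.models:
            raise KeyError(f"unknown model: {model_name}")
        self.routes[app] = model_name

    def complete(self, app: str, prompt: str) -> str:
        name = self.routes[app]
        return self.models[name].complete(self.templates[name].format(prompt=prompt))


@dataclass
class EchoModel:
    """Hypothetical stand-in for a provider client; it just echoes its input."""

    label: str

    def complete(self, prompt: str) -> str:
        return f"[{self.label}] {prompt}"


garden = ModelGarden()
garden.register("closed-a", EchoModel("closed-a"))
garden.register("open-b", EchoModel("open-b"), template="Instruction: {prompt}")
garden.route("support-bot", "closed-a")
print(garden.complete("support-bot", "Where is my order?"))  # [closed-a] Where is my order?
garden.route("support-bot", "open-b")
print(garden.complete("support-bot", "Where is my order?"))  # [open-b] Instruction: Where is my order?
```

Keying templates by model mirrors the prompt pre-testing described above: each model’s prompt is validated ahead of time, so rerouting an app also swaps in a prompt already known to work with the new model.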

Use cases: more migrating to production

14. Enterprises are building, not buying, apps—for now.

Enterprises are overwhelmingly focused on building applications in house, citing the lack of battle-tested, category-killing enterprise AI applications as one of the drivers. After all, there aren’t Magic Quadrants for apps like this (yet!). The foundation models have also made it easier than ever for enterprises to build their own AI apps by offering APIs. Enterprises are now building their own versions of familiar use cases, such as customer support and internal chatbots, while also experimenting with more novel use cases, like writing CPG recipes, narrowing the field for molecule discovery, and making sales recommendations. Much has been written about the limited differentiation of “GPT wrappers,” or startups building a familiar interface (e.g., chatbot) for a well-known output of an LLM (e.g., summarizing documents); one reason we believe these will struggle is that AI has further reduced the barrier to building similar applications in-house.

However, the jury is still out on whether this will shift when more enterprise-focused AI apps come to market. One leader noted that although they were building many use cases in house, they’re optimistic “there will be new tools coming up” and would prefer to “use the best out there.” Others believe that genAI is an increasingly “strategic tool” that allows companies to bring certain functionalities in-house instead of relying, as they traditionally have, on external vendors. Given these dynamics, we believe that apps that innovate beyond the “LLM + UI” formula and significantly rethink the underlying workflows of enterprises, or help enterprises better use their own proprietary data, stand to perform especially well in this market.

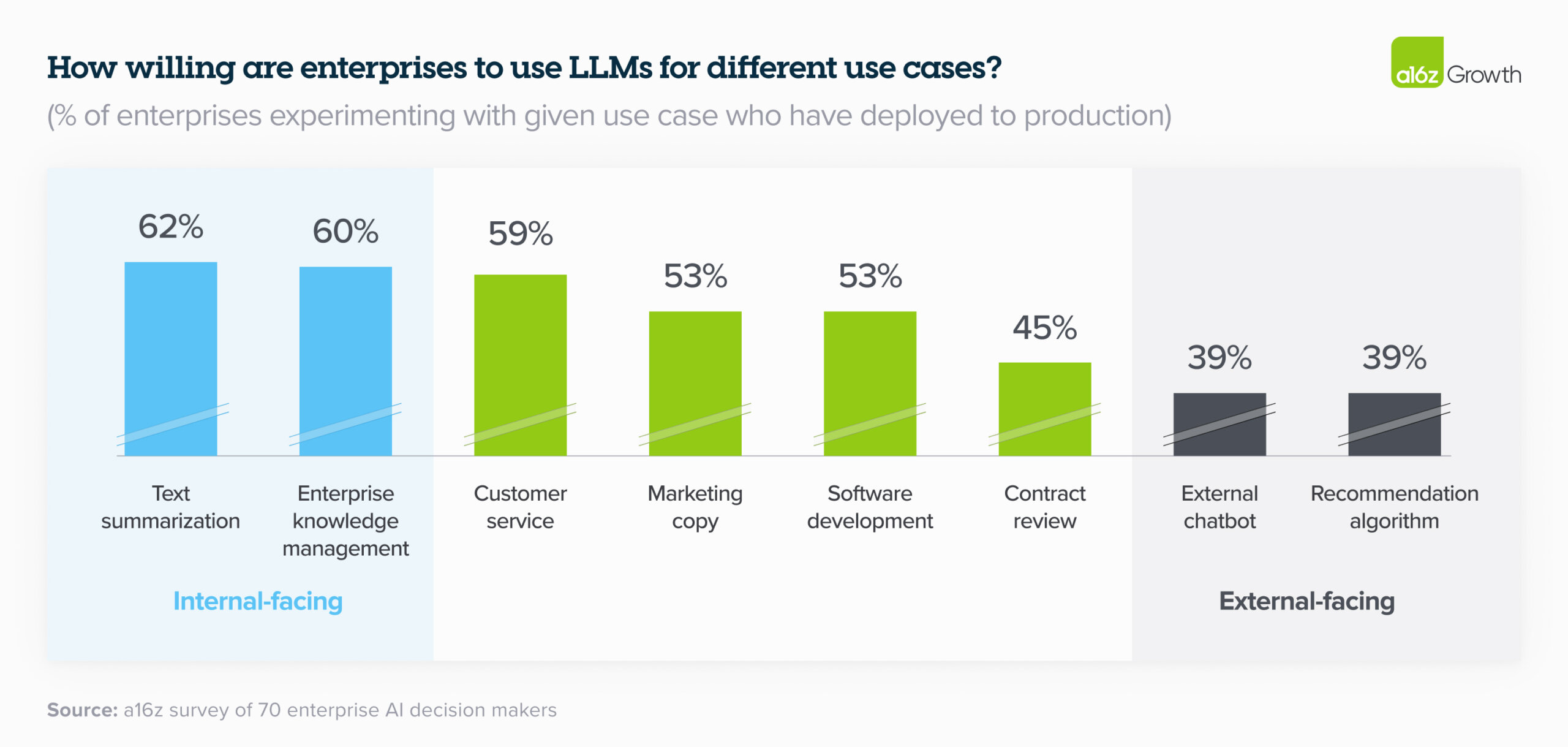

15. Enterprises are excited about internal use cases but remain more cautious about external ones.

That’s because 2 primary concerns about genAI still loom large in the enterprise: 1) potential issues with hallucination and safety, and 2) public relations issues with deploying genAI, particularly into sensitive consumer sectors (e.g., healthcare and financial services). The most popular use cases of the past year were either focused on internal productivity or routed through a human before getting to a customer—like coding copilots, customer support, and marketing. As we can see in the chart below, these use cases are still dominating in the enterprise in 2024, with enterprises pushing totally internal use cases like text summarization and knowledge management (e.g., internal chatbot) to production at far higher rates than sensitive human-in-the-loop use cases like contract review, or customer-facing use cases like external chatbots or recommendation algorithms. Companies are keen to avoid the fallout from generative AI mishaps like the Air Canada customer service debacle. Because these concerns still loom large for most enterprises, startups who build tooling that can help control for these issues could see significant adoption.

Size of total opportunity: massive and growing quickly

16. We believe total spend on model APIs and fine-tuning will grow to over $5B run-rate by the end of 2024, and enterprise spend will make up a significant part of that opportunity.

By our calculations, we estimate that the model API (including fine-tuning) market ended 2023 at around $1.5–2B run-rate revenue, including spend on OpenAI models via Azure. Given the anticipated growth in the overall market and concrete indications from enterprises, we expect spend on this area alone to grow to at least $5B run-rate by year end, with significant upside potential. As we’ve discussed, enterprises have prioritized genAI deployment, increased budgets and reallocated them to standard software lines, optimized use cases across different models, and plan to push even more workloads to production in 2024, which means they’ll likely drive a significant chunk of this growth.
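The growth this estimate implies is worth making explicit. Taking the $1.5–2B end-of-2023 run-rate and the $5B year-end 2024 figure at face value, a simple ratio gives the multiple required within a single year:

```python
def implied_multiple(start_run_rate: float, end_run_rate: float) -> float:
    """Growth multiple needed to go from one annualized run-rate to another."""
    return end_run_rate / start_run_rate


TARGET_BN = 5.0  # year-end 2024 run-rate estimate, in $B
for start_bn in (1.5, 2.0):  # low and high ends of the 2023 estimate, in $B
    print(f"${start_bn}B -> ${TARGET_BN}B: {implied_multiple(start_bn, TARGET_BN):.2f}x")
```

In other words, the estimate implies the market grows roughly 2.5x to 3.3x within a year, before counting any upside.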

Over the past 6 months, enterprises have issued a top-down mandate to find and deploy genAI solutions. Deals that used to take over a year to close are being pushed through in 2 or 3 months, and those deals are much bigger than they’ve been in the past. While this post focuses on the foundation model layer, we also believe this opportunity in the enterprise extends to other parts of the stack, from tooling that helps with fine-tuning, to model serving, to application building, and to purpose-built AI-native applications. We’re at an inflection point in genAI in the enterprise, and we’re excited to partner with the next generation of companies serving this dynamic and growing market.

- 1 Per SecondMeasure and Yipit estimates.

- 2 Industries included: technology, telecom, CPG, banking, payments, healthcare, and energy.